StepFun

Foundation Model Post-training Intern

Worked on reward modeling and RLHF pipelines for Step series models.

I am a senior undergraduate majoring in Computer Science at Shenzhen University. I am currently seeking PhD and research internship opportunities in efficient inference for large models. If you are interested, please feel free to email me.

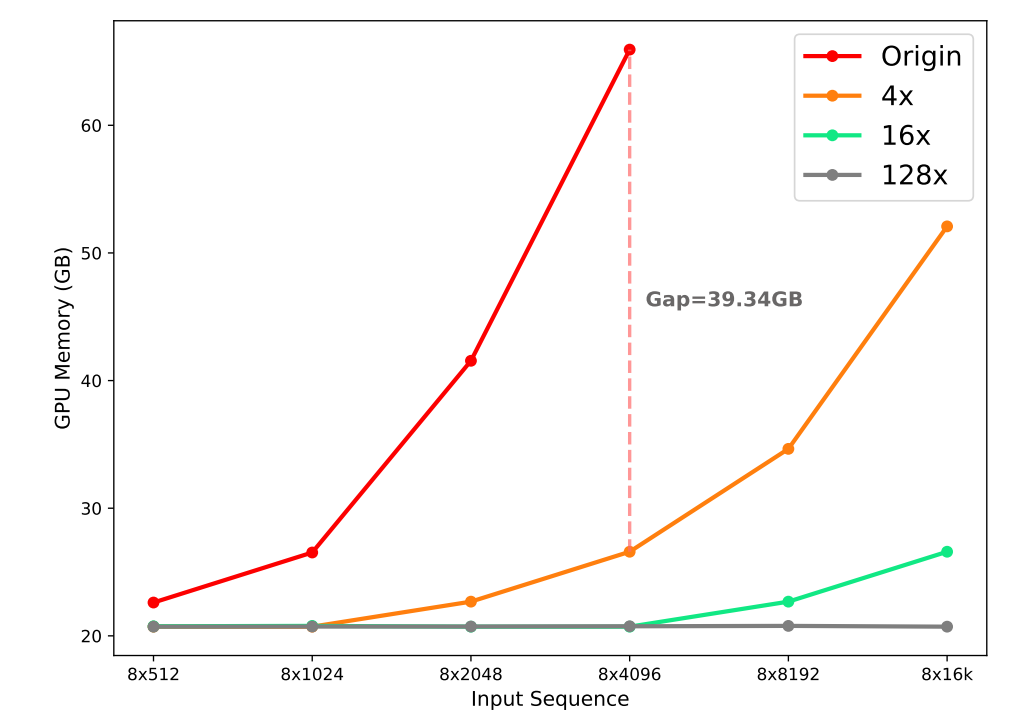

I am interested in context compression for LLM-based agents and next-generation efficient model architectures.

My previous work focused on large model pre-training, efficient inference, and multimodal AI companions.

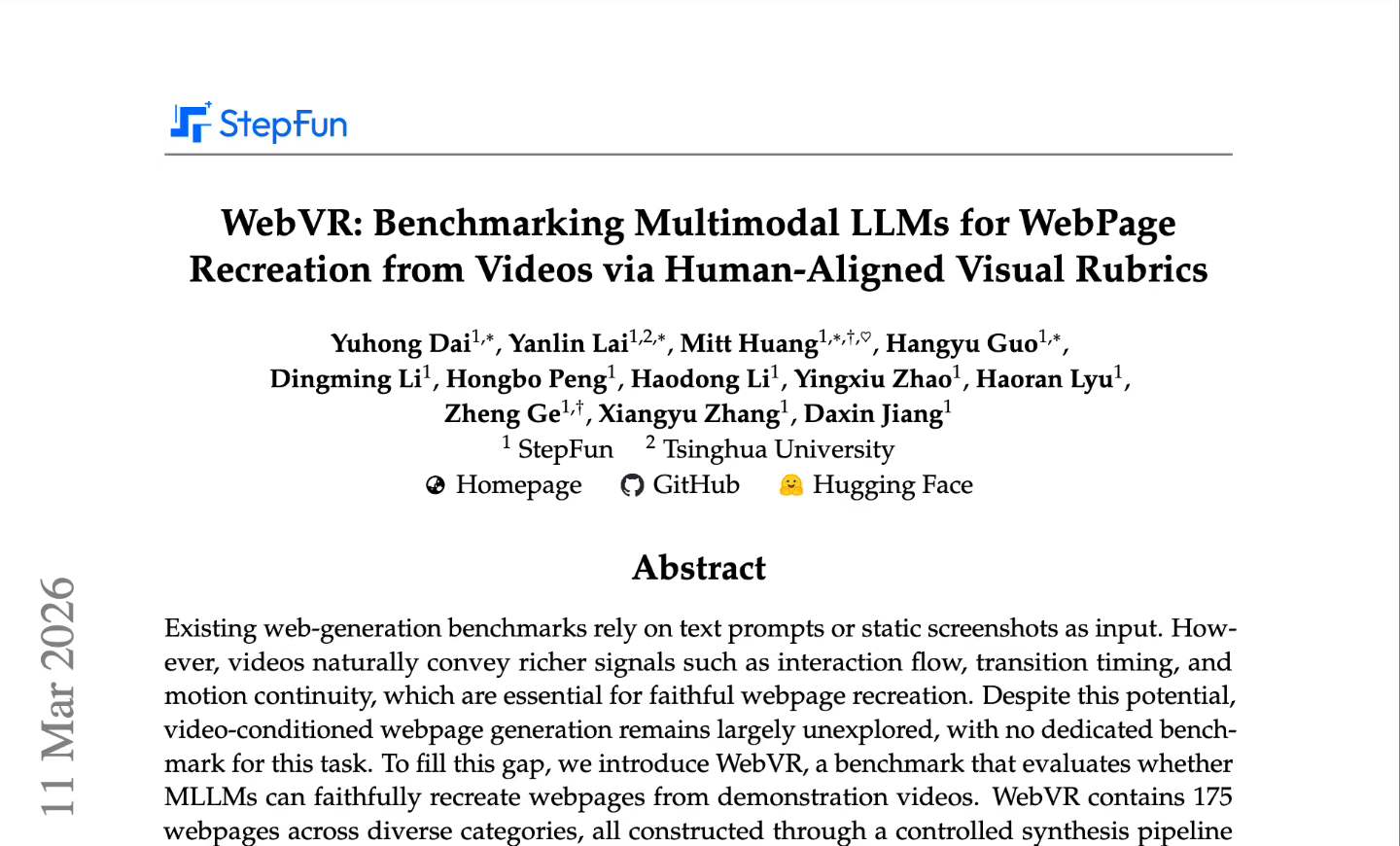

* denotes equal contribution. Full list on Google Scholar.

StepFun

Microsoft Research Asia

Tencent

RoboMaster